I burn 150 million tokens a week in Claude 20X MAX. That adds up to roughly 500 million tokens a month across Claude and ChatGPT combined. Here is what running 8-10 AI agents simultaneously has taught me about where this technology actually stands, what it costs, and why most people still refuse to use it.

Claude agents are more persistent than you think

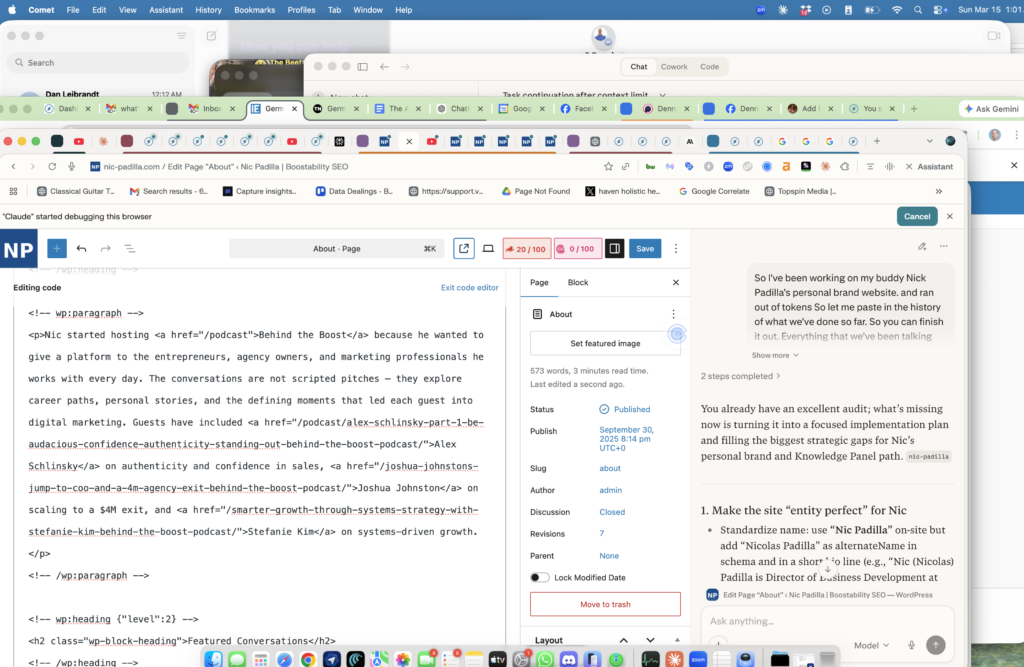

Claude agents work for hours at a time through your Chrome browser or the API, handling marketing tasks without needing constant supervision. Because our SOPs have dozens of tasks in sequence, I get more efficiency by deploying agents right before I head into a meeting or go to bed. They keep working when I am not.

Those 5-minute chunks of in-between time that I used to burn on low-value chores now become high leverage. I voice-dictate the goal and context, which usually takes me 3 minutes, so the idea of crafting a written “prompt” feels unnecessary. The agents watch our trainings, reference our article guidelines, and follow our Dollar a Day strategy documentation on their own.

What it actually costs to run AI agents at scale

Claude 20X MAX costs me $200 per month plus about $250 in extra usage when I exceed session limits. Because I typically have 8-10 agents working at the same time, that extra usage runs about a dollar a minute once I am over the limit. Judging by my own numbers, Anthropic appears to be selling this 20X plan at a massive loss to gain market share.

I estimate my Claude usage would cost $7,500 a month if I paid the standard API pricing of $5 per million input tokens and $25 per million output tokens. I run all of this on a MacBook Pro with 128GB of RAM so I can have more agents working. Chrome eats about 1GB of RAM per tab, so my 8-10 agents each get a few tabs in Chrome tab groups while I keep about 10 tabs for myself.
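To make the API comparison concrete, here is a minimal sketch of the arithmetic in Python. The per-million prices are the ones quoted above. The input/output split in the example is a hypothetical illustration, since the real mix (and any caching discounts) is not stated here, so the result is not meant to reproduce the $7,500 estimate.

```python
def api_cost_usd(input_tokens: int, output_tokens: int,
                 input_price_per_m: float = 5.0,
                 output_price_per_m: float = 25.0) -> float:
    """Return the pay-as-you-go cost in dollars for a given token mix."""
    input_cost = (input_tokens / 1_000_000) * input_price_per_m
    output_cost = (output_tokens / 1_000_000) * output_price_per_m
    return input_cost + output_cost


# Hypothetical monthly mix (an assumption, not a measured figure):
# 400M input tokens and 100M output tokens.
monthly = api_cost_usd(400_000_000, 100_000_000)
print(f"${monthly:,.0f} per month")  # $4,500 per month
```

Because output tokens cost five times as much as input tokens at these prices, the split matters more than the raw total.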

Why 90 percent of people still will not use AI agents

Even though I demonstrate how I use these agents in live training calls, workshops, and conferences, literally speaking commands and providing SOPs, 90 percent of people just will not do it. Almost no amount of convincing will get these horses to drink the water, so focus on A Players who are motivated.

Virtual Assistants are mostly cooked, except the few who have critical thinking skills and can learn team management. Technical skills alone are nearly useless, since the agents can write better than I can. What still counts is judgment: it is harder for agents to fool me because I can inspect the work. This is exactly why the mindless video editor and SEO expert are irreversibly doomed.

Feed your SOPs back into the loop

We have agents write up what they did after completing a block of work, comparing against the QA checklist for that task. Then we feed those examples back into the SOPs to make them better. This is the same process we teach in our Content Factory training where documentation improves with every cycle.
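As a rough illustration of that loop, the sketch below compares an agent's write-up against a QA checklist and turns each missed item into a note for the next SOP revision. Everything here is hypothetical: `RunReport`, `missed_items`, `sop_feedback`, and the checklist entries are made-up names for illustration, not our actual tooling.

```python
from dataclasses import dataclass, field


@dataclass
class RunReport:
    """An agent's write-up of one block of work (hypothetical structure)."""
    task: str
    completed_items: set[str] = field(default_factory=set)


def missed_items(checklist: list[str], report: RunReport) -> list[str]:
    """Return checklist items the report does not claim, in checklist order."""
    return [item for item in checklist if item not in report.completed_items]


def sop_feedback(checklist: list[str], report: RunReport) -> list[str]:
    """Turn each missed item into a note to review when revising the SOP."""
    return [
        f"{report.task}: clarify step '{item}'"
        for item in missed_items(checklist, report)
    ]


# Illustrative checklist and report; the item names are assumptions.
checklist = ["headline matches guideline", "links checked", "image has alt text"]
report = RunReport(
    task="publish article",
    completed_items={"headline matches guideline", "links checked"},
)
print(sop_feedback(checklist, report))
```

A human still decides which notes belong in the SOP. The point is only that the gaps get captured every cycle instead of being forgotten.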

My sustainable value, and yours, is relationships and physical assets. The AI cannot replace those yet. Because all work is now done by my team, I more commonly say “we” when describing work that was done, even if it was me and a couple of agents with no other humans. It is less about the tool and more about the chef using it.

Conferences are dead, give me the SOPs

The idea of going to a conference to “learn” by watching people read PowerPoint slides is now ludicrous. I want the result, so I want the underlying SOPs, from people who have actually accomplished what I am after, to feed to my agents. If you have documented processes that produce real outcomes, that is more valuable than any keynote.

Use multiple models and let them audit each other

I have developed a “relationship” with ChatGPT, strengthened by its growing persistent memory, which initially made me reluctant to talk to other models. I overcame this by asking ChatGPT to create a complete profile of who I am so that I can use Claude, Gemini, and Grok interchangeably.

I have the models audit each other’s work, which may or may not create a competitive rivalry. There is something valuable about having third-party cross-accountability. It keeps the output honest and catches errors that a single model would miss.
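The cross-audit pattern can be sketched in a few lines of Python. This is an assumption-laden outline, not a real integration: each model is represented by a hypothetical callable that takes a prompt and returns text, and `cross_audit` is an invented name. In practice you would wrap each vendor's own client behind that callable.

```python
from typing import Callable

# Hypothetical interface: a model is anything that maps a prompt to text.
Model = Callable[[str], str]


def cross_audit(task: str, author: str,
                models: dict[str, Model]) -> tuple[str, dict[str, str]]:
    """Have one model draft the work, then collect a review from every other model."""
    draft = models[author](task)
    reviews = {
        name: ask(f"Audit this work for errors.\nTask: {task}\nWork: {draft}")
        for name, ask in models.items()
        if name != author
    }
    return draft, reviews


# Stub models for illustration only; they do not call any real service.
stubs: dict[str, Model] = {
    "model_a": lambda prompt: "draft headline",
    "model_b": lambda prompt: "reviewed by model_b",
    "model_c": lambda prompt: "reviewed by model_c",
}
draft, reviews = cross_audit("Write a headline", "model_a", stubs)
print(draft)
print(sorted(reviews))
```

Keeping the author out of its own review set is the whole design choice: every piece of work gets at least one reader that did not write it.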

Stop talking about it and start doing it

It takes less time to actually do the thing than to talk about it. If you want to understand how to turn your knowledge into AI agents that scale your business, start by documenting what you already do well, then let the agents execute against those SOPs. The people who win will be the ones who ship, not the ones who debate which model is best on social media.

What are you feeding your AI agents? Have you documented your SOPs well enough that an agent could execute them while you sleep? I would love to hear what is working for you.